From Org Charts to Outcomes

The New Operating Model for Talent

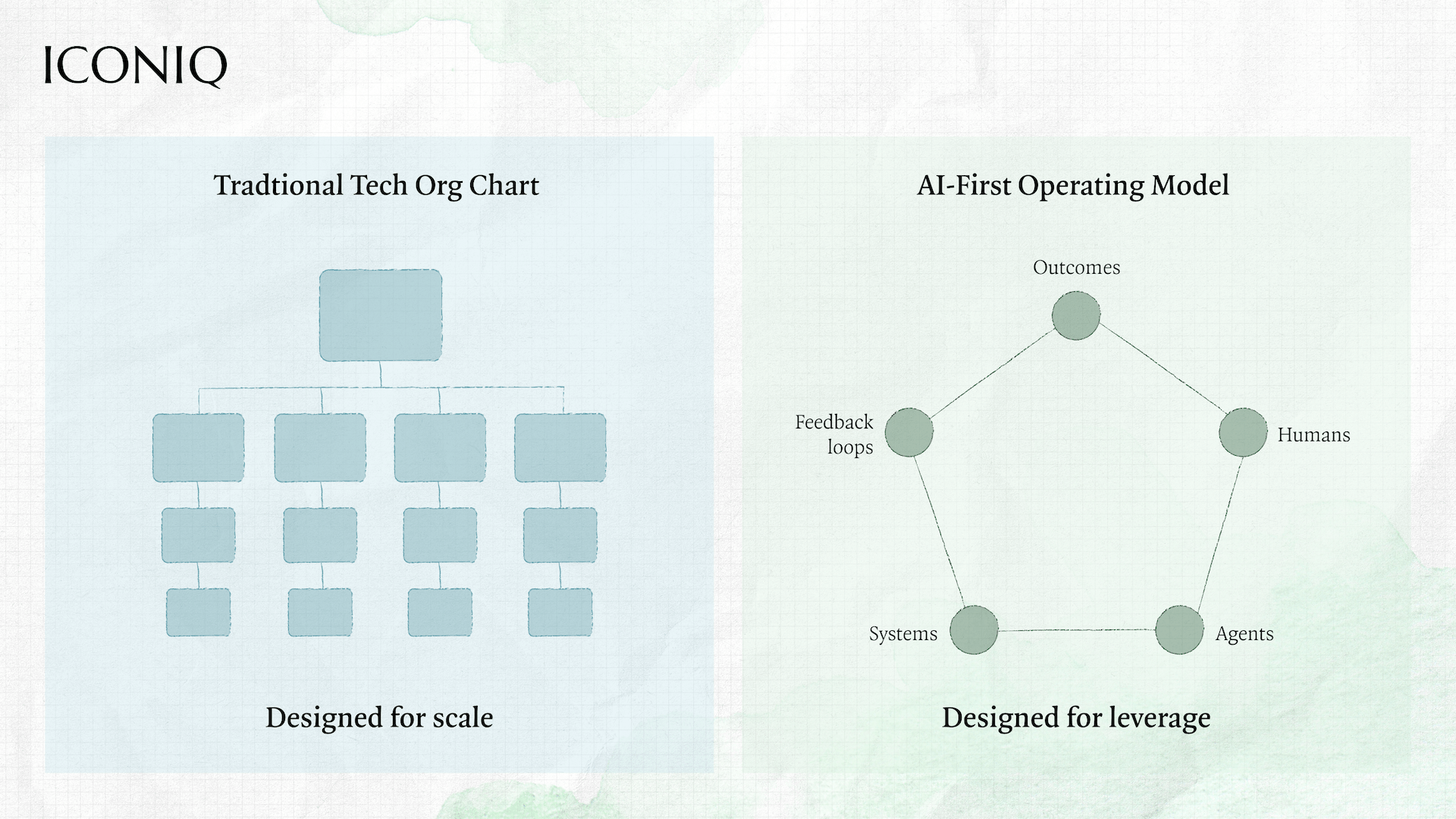

For the last decade, tech companies optimized for scale: repeatable hiring plans, functional specialization, predictable org charts.

We believe the next decade will be defined by something harder to achieve: leverage.

AI didn’t just introduce new tools into the workplace. It destabilized the very logic behind roles, teams, and career paths. Yet many discussions about “AI and the workforce” still orbit around the same questions: productivity gains, cost efficiency, copilots for knowledge workers.

That framing misses the point.

In recent years, we’ve seen a growing divergence between companies that introduce AI into existing workflows and those designed natively around it. The difference isn’t feature velocity, but rather operating architecture.

Increasingly, we find ourselves evaluating not just what a company builds, but how its team is structured to build it. AI-native companies compound differently because their organizations are designed for leverage from day one.

The defining trait of AI-first companies won’t necessarily be how much AI they deploy. In our view, it will be how willing they are to revisit long-held assumptions about what work exists, who should do it, and how organizations should be structured in the first place. After speaking with CEOs, functional leaders, and operators actively navigating this transition, we posit that the workforce of the future won’t look like a cleaner, faster version of today’s org chart. In some cases, it won’t resemble an org chart at all.

What follows are the structural shifts we see emerging across AI-first organizations: not a collection of hot takes, but patterns already underway. Some are uncomfortable. Some are unfinished. Many have yet to stand the test of time. All of them challenge the logic behind how companies have traditionally designed talent, teams, and career paths.

Chapters in this Report

The Stable Role Is Dying. Hybrid Is the Default

AI collapses handoffs. When intelligence is embedded directly into workflows, the seams between product, engineering, GTM, and operations start to dissolve.

AI Isn’t Just Creating New Roles. It’s Rewriting the Old Ones

Some of the most profound workforce shifts aren’t showing up as shiny new job titles but instead showing up as scope explosions inside familiar roles.

The Entry-Level Job Is the Real Casualty

In our view, AI’s most immediate workforce impact isn’t mass layoffs. It’s the erosion of apprentice work.

The New Atomic Unit Isn't a Role. It's a Decision

Rewritten roles are the symptom. The deeper shift is architectural.

Speed Today, Organizational Debt Tomorrow

Many workforce decisions being made in the name of AI optimization are rational in the short term and risky in the long term.

Leadership Is the True Constraint

One of the most consistent insights across our conversations: AI transformation stalls less because of tools, and more because of leadership.

The Stable Role Is Dying. Hybrid Is the Default

AI collapses handoffs. When intelligence is embedded directly into workflows, the seams between product, engineering, GTM, and operations start to dissolve.

That’s why many AI-first companies are experimenting with hybrid, frontier roles like Forward Deployed Engineers, GTM-engineering hybrids, AI solution architects, and internal data enablement leads. These roles sit at the boundary between functions, helping translate real-world problems directly into product behavior.

“The stable, specialist role is fading. AI-first teams will be smaller, more end-to-end, and more generalist, with engineers taking on work once owned by PMs and designers. Startups will adapt quickly; large enterprises will feel the friction.”

Patrick Forquer, CRO of Legora, sees this playing out concretely in his own org: "At Legora, sales increasingly looks like product discovery. Agentic/prompt-based selling requires hyper focus on relevant outcomes, so customer success involves continuous value engineering with product team involvement earlier and collaboration with our legal engineering teams."

Some operators see these roles as permanent fixtures: a new class of talent optimized for speed, feedback loops, and ambiguity.

One of the strongest signals of this shift shows up not in org charts but in hiring filters. Traditional hiring optimized for predictability. Pedigree, prior role scope, years of experience, brand-name companies: these were proxies for execution reliability inside relatively stable systems. If the job was well defined, the goal was to find someone who had already done it before.

AI-first environments appear to break that logic.

When tools change monthly, when workflows (and products) are rewritten every quarter, and when individuals can spin up prototypes, automations, or copilots on their own, the scarcest resource isn’t experience. It’s learning velocity.

The premium shifts from someone who has done this exact job before to someone who can translate ambiguity into action. HubSpot CMO Kipp Bodnar described a radical simplification of his hiring filter: when candidates reach out, he asks them to send what they’ve built with AI in the past few weeks.

The shift is becoming even more explicit. Wealthsimple recently launched AI Builders, a non-traditional hiring process for a small group of cross-functional builders. No resumes. No minimum years of experience. Instead, candidates are asked to build and demo a working AI system that meaningfully expands what a human can do. The bar is not AI layered onto old workflows but AI-native systems designed from scratch, with clear judgment about where AI should own the work and where humans should stay in control. Submissions are reviewed within 48 hours. Interviews and offers happen the same week.

The signal is unmistakable to us: proof of leverage now matters more than pedigree.

“We’ve shifted our hiring from focusing on specific tasks to hiring for curiosity and agility. We are looking for individuals with a builder mentality and the agency to solve problems independently.”

AI Isn’t Just Creating New Roles. It’s Rewriting the Old Ones

Some of the most profound workforce shifts aren’t showing up as shiny new job titles but instead showing up as scope explosions inside familiar roles.

Product managers are becoming system orchestrators, owning not just features, but model behavior, data quality, and user trust. Engineers increasingly own the full model lifecycle, not just code shipping. Designers are shifting from visual interfaces to conversational and behavioral design. Sales and customer success roles are becoming less about execution and more about judgment, context, and relationship management.

“The companies that win won’t treat AI as a feature. They’ll redesign every role and workflow assuming intelligence is abundant. The ones that simply automate around the edges will compound more slowly.”

Dennis Lyandres’s point is a call to action: every role should be re-scoped with AI as a design principle, not an afterthought. But what happens when you actually start doing that work?

The early signal isn’t new org charts. It’s a shift in how work feels day to day: faster cycles, higher expectations, and widening performance gaps. As Kipp Bodnar, CMO of HubSpot, puts it: “The fundamental structure of teams hasn’t fully changed yet, but the way work gets done already has. The biggest shift is in how people work: speed, iteration cycles, and leverage have all changed dramatically. AI is amplifying extremes and leaving the middle behind.”

Some AI-native companies are experimenting with whether hierarchy needs to be visible at all. ElevenLabs has removed formal job titles. Rather than “VP of Product” or “Head of Growth,” employees are simply part of a team (Operations, Go-to-Market, Engineering), with an expectation that they step into the highest-impact work as priorities shift.

Importantly, “no title” does not mean “no structure.” The company still operates with managers, defined roles, levels, compensation bands, and clear decision rights. Leadership and accountability remain intact. What’s changed is not the existence of hierarchy, but the decision not to signal it through labels. The intent wasn’t to eliminate leadership. It was to reduce the subtle gravitational pull of titles toward status, ladder progression, and rigid functional boundaries at a moment when flexibility matters more. Leaders wanted people asking, “Where can I have the most impact right now?” rather than “What does it take to become a ‘Head of’?”

It's unclear whether a lighter-weight approach to titles holds as companies scale and governance complexity increases. But we believe the experiment reflects something deeper: AI-native companies are questioning which parts of traditional hierarchy are essential and which are artifacts of a slower, more siloed era. The answer to that question lives not in the org chart but in what the work actually requires.

The Entry-Level Job Is the Real Casualty

In our view, AI’s most immediate workforce impact isn’t mass layoffs. It’s the erosion of apprentice work.

When execution becomes cheap, fast, and automated, entry-level roles that once existed to build judgment through repetition start to disappear. Many leaders privately admit they’re unsure how future talent will develop if early-career “learning by doing” no longer exists at scale.

Some view this as a temporary gap. Others believe the traditional career ladder is fundamentally broken.

“It's hard for young knowledge workers to break into the industry right now. I'm seeing talented young computer science new grads who can't even get an interview. The notion of replacing entry-level workers with AI is short-sighted and has the potential to create a pipeline gap in skilled workers we're still going to need. This is an area where the hype, or even the short-term cost saving reality, could be leading us astray.”

What’s missing today is a coherent answer to a simple question. If AI removes the work through which people used to learn, where does learning happen now?

Until companies intentionally redesign early-career development, AI risks helping create a workforce optimized for the present, but dangerously thin in the future.

The New Atomic Unit Isn't a Role. It's a Decision

Rewritten roles are the symptom. The deeper shift is architectural. When AI absorbs coordination, synthesis, and execution at scale, the scarce resource inside any organization stops being capacity and starts being judgment — the ability to define the right problem, form a testable hypothesis, and decide what happens when the result comes back. AI-first companies aren't organizing around functions anymore. They're organizing around where that judgment lives, who holds it, and how quickly it can be applied.

One of the subtler but perhaps more radical expressions of this shift can be seen in how organizations think about capacity. We're seeing smaller teams reach product depth and revenue milestones that previously required far more scale. When leverage is built into the operating model, capital efficiency becomes structural rather than episodic. Work is scoped, decomposed, and assigned across a mix of humans and agents. But the more important question isn't how many agents a company deploys. It's what kind of human thinking sits alongside them — and whether the org is designed to surface it.

“We’re moving from units of humans to units of work. The question isn’t how many people you have. It’s how efficiently the work gets done. Agents will increasingly be treated like employees; we’ll hire and fire them, give them seats, licenses, and scope ownership.”

Here's a hypothesis worth stress-testing: the roles that survive and compound aren't necessarily defined by function. They could be defined by a way of working. Scope the problem. Form a hypothesis. Build or test something. Measure. Iterate. This pattern has historically lived inside engineering — but that's less about technical skill and more about epistemic discipline: the trained habit of operating under uncertainty, decomposing ambiguity, and treating assumptions as things to be tested rather than inherited. That cognitive pattern is increasingly becoming the baseline expectation across every function.

Which raises the harder question: which roles are being hollowed out? The uncomfortable answer is that the exposed roles aren't necessarily the lowest-skilled. They're the ones where a disproportionate share of current work is coordination, synthesis, and reporting — regardless of seniority. Traditional program managers, mid-level marketing managers, and business analysts fit this profile. Not because their judgment is worthless, but because AI doesn't just assist those tasks. It renders the role-as-currently-defined redundant, while leaving the underlying judgment capability in need of a new container.

The roles being rewritten rather than eliminated are the ones where human judgment is load-bearing but currently buried under execution. Customer success is a clear example. Today it's largely reactive and process-heavy. In an AI-first model, it becomes something closer to a value engineer: someone who can diagnose why a product isn't delivering outcomes, form a hypothesis about what needs to change, and influence product direction. That's not a CS role. It's applied judgment with a commercial mandate.

Dennis Lyandres, ICONIQ GTM Advisor and Former CRO of Procore, puts it plainly: “AI doesn’t just change productivity; it changes the unit of work. The highest-performing teams will be smaller, flatter, and denser — fewer people with broader scope and more agency. The leadership challenge isn’t control; it’s clarity. When leverage increases, supervision matters less than alignment, incentives, and shared context. Companies that redesign roles around that reality will compound differently than those that simply automate yesterday’s org chart.”

But this model also introduces fragility. What happens when institutional knowledge concentrates in a small number of high-agency individuals? When compensation frameworks collide with 10x productivity differentials? AI-first orgs may be flatter and faster — they are not automatically more resilient. In systems optimized for leverage, culture becomes infrastructure. And the leaders who recognize that early are the ones who will be able to scale it.

Speed Today, Organizational Debt Tomorrow

Many workforce decisions being made in the name of AI optimization are rational in the short term and risky in the long term.

For example, forward-deployed engineers accelerate learning but can mask product gaps. Lean teams move quickly but can quietly accumulate fragility. Over-indexing on immediate leverage can starve investments in platforms, governance, and scalable foundations. The tension isn’t whether these moves work. It’s whether they compound.

“Forward deployed engineers aren’t really a new role - they’re a new label for something companies have always done when the product isn’t ready yet. They can be incredibly effective in the short term, but the risk is that you end up hard-coding bespoke solutions into the org. If most of what FDEs build never turns into repeatable product or durable process, you’re not accelerating learning - you’re quietly accumulating tech debt.”

Rob’s point is less about FDEs specifically and more about a pattern: when speed outpaces system design, customization masquerades as progress. The organization feels more productive, but beneath the surface, complexity compounds.

A similar dynamic is playing out in how companies talk about AI itself. Matt Eccleston, ICONIQ Technical Advisor and Former VP Growth of Dropbox, urges precision: "One of the most overhyped ideas is that AI will fully replace anything. It will substantially accelerate certain types of work, but at the end of the day it's a tool and will need a highly competent human operator for the foreseeable future. My prediction is that AI is going to stop being such a catch-all term in the next couple years. We will talk about LLMs and we'll talk about synthetically generated video/audio/images, and we'll stop confusing the world by lumping these things under a label with the word 'intelligence' attached to it."

Matt’s caution reframes the debate. If AI is a tool, not an autonomous replacement for judgment (at least not yet), then workforce design should optimize for durable human capability, not assumed human redundancy. Overestimating AI’s autonomy can be just as dangerous as underinvesting in foundational systems.

Taken together, these perspectives expose the real risk: mistaking acceleration for transformation.

AI can help you close deals faster, ship experiments quicker, and automate manual tasks. But if those gains aren’t converting into repeatable product, scalable systems, and deeper institutional knowledge, you’re not building leverage; you’re building organizational debt.

Leadership Is the True Constraint

Perhaps one of the most consistent insights across our conversations: AI transformation stalls less because of tools, and more because of leadership.

In AI-first companies, leaders cannot outsource understanding. Instead, they must actively use the tools, grasp the constraints, and operate in constant learning mode. Credibility increasingly comes from fluency, not delegation. As Diane Adams, former Chief Culture & Talent Officer of Sprinklr and ICONIQ advisor, notes, this shift also raises a deeper leadership challenge: “When you give people more agency and judgment, emotional intelligence becomes central. Leaders must create trust within the team. Integrity becomes the operating system.”

“As leaders, you need to have the ability to surface real-time data and insights AND make quick decisions vs the ‘wait and see’ traditional SaaS approach.”

This expectation is uncomfortable, especially at scale. It favors leaders willing to relearn, experiment publicly, and abandon old markers of expertise.

But the alternative could be worse: leaders designing organizations around a future they don’t personally understand.

Kipp Bodnar, CMO of HubSpot, puts the challenge bluntly: "If you're not learning and using these tools yourself as a leader, you're already behind. You can't ask your team to do work you're unwilling to do."

While many leaders focus on technical fluency, Diane Adams argues the real differentiator is human capability. If AI-first organizations are designed around leverage, then AI-first leadership should be designed around clarity: clarity of outcomes, clarity of culture, and clarity of expectations.

“The AI leader isn’t defined by hard skills. In many ways, it’s the opposite. As complexity increases, leadership must become more human-centric. Clear vision. Clear outcomes. Integrity that people can trust. Emotional intelligence in decision-making becomes more important, not less.”

The Middle Ground Some Companies May Miss

Not every role will change. Not every hybrid will last. Not every company needs to look like a frontier lab.

But one of the most dangerous assumptions leaders can make right now is that AI only changes how fast work gets done, rather than what work exists at all.

AI-first companies are not simply compressing timelines or cutting costs. They are rethinking the atomic units of work: which decisions require humans, where judgment lives, how learning happens, and what an organization is actually optimized to produce. Org charts, job titles, and career ladders are downstream artifacts of those choices, not the starting point.

Those choices will help determine whether AI becomes a layer on top of the business or the foundation beneath it. The companies that make them deliberately may also be the ones willing to confront uncomfortable questions early:

- Which roles exist today only because work used to be scarce or slow?

- Where are we accumulating organizational debt in the name of short-term leverage?

- And if this is the last time we get to redesign how people learn, grow, and create value at scale, what do we do differently now?

In the next decade, we believe the advantage won’t come from scaling headcount or perfecting yesterday’s operating model. It will likely come from leaders who are honest about which assumptions no longer hold, and bold enough to rebuild their workforce around outcomes, learning velocity, and leverage before the market forces their hand.

Many of these ideas are still being tested in real time, and the right answers are far from settled. If you’re experimenting with new talent models, have contrarian views, or are seeing patterns we missed, I’d love to hear from you. You can reach me, Vivian Guo, at vguo@iconiqcapital.com.